Unless you've been living under a rock for the last couple of months, you know that AI is now writing the majority of code for almost everyone. And we've seen the results. Claude Code had a single “nine” of uptime in March 2026 (less than 99%). The industry standard for critical services is three nines (99.9%) or more. GitHub has become so unreliable that Mitchell Hashimoto, co-founder of HashiCorp and creator of the Ghostty terminal, is moving his terminal project off GitHub to a more reliable service. Lots of services are taking downtime right now because we are on a very painful industry-wide learning curve, still trying to figure out how to write reliable code with AI.
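To put those nines in perspective, here is a quick back-of-the-envelope sketch of how much downtime each level allows in a 31-day month like March. The function name is my own, and it only does the arithmetic; it says nothing about how any particular service measures uptime.

```python
def allowed_downtime_minutes(nines: int, days: int = 31) -> float:
    """Minutes of downtime permitted over `days` at the given number of nines."""
    unavailable_fraction = 10 ** -nines   # 2 nines -> 0.01, 3 nines -> 0.001
    total_minutes = days * 24 * 60
    return unavailable_fraction * total_minutes


if __name__ == "__main__":
    for nines in (1, 2, 3, 4):
        minutes = allowed_downtime_minutes(nines)
        print(f"{nines} nine(s): {minutes:,.1f} minutes of downtime allowed")
```

Two nines allow roughly 446 minutes (about 7.4 hours) of downtime in a 31-day month, while three nines allow under 45 minutes. Anything below 99% means more than those 7.4 hours.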

It's not just the early adopters who are writing code now. It's the middle majority, 70% of people, who are starting to use these tools, installing Cursor for the first time or firing up Claude to write their own applications. Hell, even my own non-technical brother has been vibe coding applications for his digital marketing consulting services over the past three months. I have not helped him write a single line. I have only chatted with him over iMessage, and you can imagine how well coding works over that (it doesn’t). He is completely enabled by Claude Code to become a software developer, which is insane. I'm not threatened by this, because I have many more years of experience creating software than my brother (or any equivalent non-technical person coding for the first time). I know where the bodies are buried and where things can go wrong.

Where the Bodies are Buried

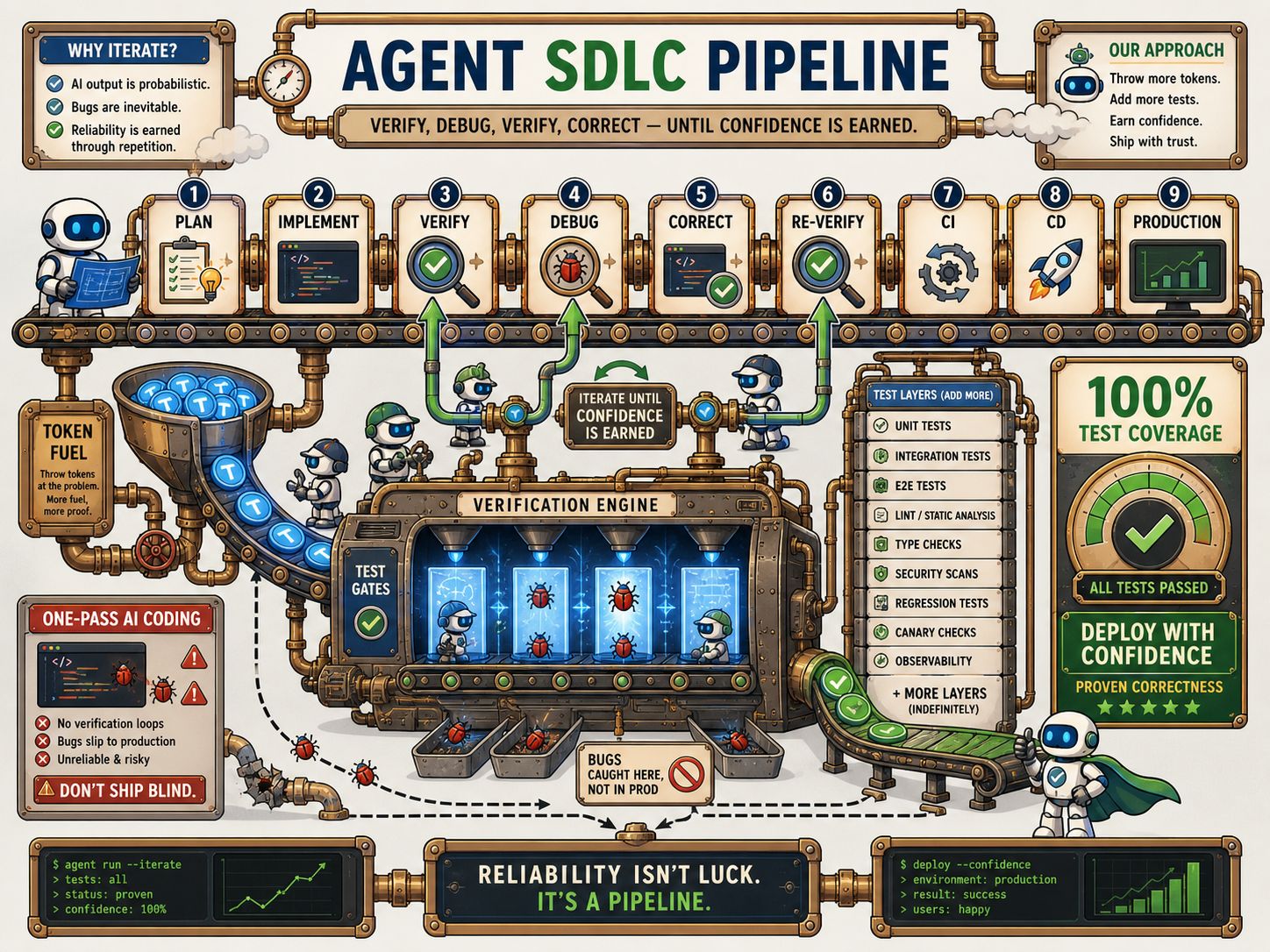

It gets me thinking: how do I improve his vibe coding process? I think it's my job, and the job of other software engineers, to demonstrate and enforce battle-tested practices. Things like version control with git, CI/CD, TDD, and tracer bullet implementations: basically all the lessons we've learned through trying and failing at software engineering up until this point. How did we develop reliable software with many different people touching the same codebase at the same time?

The answer is in preventative and reactive “DevOps” practices. Preventative practices are the ones I listed above, in the pre-deployment phase (git, CI/CD, TDD, etc.). Reactive practices are used when code is in production, such as observability (logs, metrics, traces, alerts, etc.). Now we're relying on these same systems for AI-written code.

People think that humans will continue to be in the loop for reviewing code line-by-line, even though the next billion-dollar company is likely being created right now by one person who hasn't read a single line of their code. Agents produce multiple orders of magnitude more code, more easily than any human ever has before. Value doesn’t care if a human has read the code or not, just that it works.

The current systems that we're using for verifying human-written code will likely fall short for systems that are being built exclusively with AI-written code. The cool thing is that we can point our AI agents at the preventative and reactive DevOps systems to make them even better. Doing the existing thing to the nth degree is one way to improve, but I think we may also need to pull in some new (old) methods.

If you haven't already, you can join our Discord to connect with other like-minded AI-enabled software engineers and discuss the latest trends, tips, tricks, and techniques.

It's a great place to further explore topics that we discuss on the live stream and where I put all of the links that we pull up every stream.

A Ghost from Christmas Past

This week I came across an article written in December 2025 about something I first heard about in college called “formal verification”. The idea is that we can mathematically prove[1] that code works in a very specific, known, trusted, deterministic way. I first learned about it from a professor I had freshman year, Paul Hatalsky. He talked about how, early in his career, he used formal verification to prove that a chess solver program he wrote was actually correct and was choosing the optimal move for a given board configuration (to the best of my memory of the story). This is a well-known part of computer science that people study for a class and then promptly forget once they get into industry. That is, unless they work on a very specific, mission-critical use case like encryption, or on proving low-level systems like CPUs to be absolutely, deterministically correct.
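To make the difference between a test and a proof concrete, here is a toy sketch in Lean 4. The `double` function is a made-up example of mine, not anything from the article or from my clones, and the proof assumes a recent Lean 4 toolchain where the `omega` tactic is available.

```lean
-- A toy function: double a natural number.
def double (n : Nat) : Nat := n + n

-- A unit test checks one input.
example : double 21 = 42 := rfl

-- A proof covers every possible input at once.
theorem double_eq_two_mul (n : Nat) : double n = 2 * n := by
  unfold double
  omega
```

The unit-test-style `example` only tells us about 21. The theorem is checked by the compiler for all natural numbers, which is the kind of guarantee that testing alone cannot give.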

Guess who has a code verification problem now? Everyone.

I am doing a test run of formal verification frameworks with two Twitter clones, one in golang and one in Rust. I want to see what the process is like for adding formal verification as a stage in normal app development. The cost of code is going to zero and compute is very cheap as well. Any incremental extra stage in the SDLC process essentially doesn't matter and is “close to free”, especially when you're selling that software for an astronomical margin.

We can add any number of stages or any number of processes, and it almost doesn't matter, because the AI agent is doing everything anyway. The goal is to not look at the code, right? So what does it cost you to add formal verification tests, plus 100% code coverage in unit tests, 100% coverage in integration tests, and 100% coverage in functional system automation tests?

These were inhuman standards to keep before, but now that we are writing code that is inhuman, we need inhuman-level testing. I believe formal verification could be the fourth leg that turns the three-legged stool of testing (unit, integration, functional system) into a table.

We now can potentially throw in a formal verification stage into every CI/CD pipeline just for funsies and it basically doesn't matter. We don't need to worry about how much additional time or tokens it takes. If we can improve the “One Nine” reliability problem of taking non-deterministic AI-generated code and getting it to reach a deterministic end goal state, we’ll have unlocked accessible software development for everyone.

If you're an avid reader of this newsletter, you'll know that I view vibe coding from a constraints-led approach (CLA) perspective. We can use formal verification frameworks to help constrain what the output looks like. All these different types of test methodologies are just ways for us to constrain the potential outputs of the non-deterministic AI system. If we give agents a more and more well-defined target to aim at, they become more and more likely to hit it.

AI-in-depth, or: AI all the way down

Think of defense in depth in cybersecurity, but for preventing bad code from going out to production. The idea is that we want many different layers of security, with no single point of failure that can give access to the entire system. If one layer fails, other layers that don't depend on the failed layer kick in. Nothing should be able to fall through all of the cracks. For someone to break into a system, many things would have to go wrong simultaneously. The most common example that people encounter is a password combined with multi-factor authentication.

Code quality is now mediated by how many tokens you throw at the problem. I believe we can create a many-layered (and looping) process that guides those tokens in the most useful way possible. I want to help Vibe Coders focus on solving business domain problems, rather than worrying about how reliable their code is or even the process it goes through for reaching production.

This is a motivation for my DIALED framework. I want to be able to point people like my brother to a sane set of real, “dialed in” software engineering defaults. The repo gives their agents the vocabulary they need to follow best practices that newbie vibe coders may not even know the words to prompt for. Right now, the framework is just a two-tier AWS account deployment in Terraform where every PR can spin up a new stack. On merge to main, the code gets deployed to an integration dev stack where we run tests, and then it gets deployed to production. I can imagine adding more layers of testing to this framework as we discover them.

We want as many guardrails as possible for the AI to iterate against, both hard (gates) and soft (steering on the laptop). Anything that frustrates a human does not frustrate an AI. AIs have unlimited patience and are willing to sit through many rounds of design, code, and verification review, arguing back and forth with other models. They tirelessly iterate towards a given goal. They used to cheat to get things done within a context window, but I honestly haven't thought about a context window in months. I think agent harnesses have gotten that good.

Agentic Convergence

“Convergence” is a cool-sounding word that people throw around, but it’s really just agents debating back and forth, negotiating, and agreeing or disagreeing on plans, implementation, testing, etc, much like humans do. Readers with a leftist persuasion may recognize this as “dialectics”.

This morning I created my own convergence skill, an idea I first had back at Thanksgiving. Back then, I thought it was going to be a huge undertaking with mostly golang code. I called it “Buckshot”. Now it has taken me less than 30 minutes to create with a simple “hey claude, let’s make a skill where we call Codex to go back and forth with Claude until they agree or hit a deadlock”, and I have iteratively made it better and better since. Lots of things that used to be apps are now skills, like my app “Roxas”, which is now a set of Claude social media skills (and much easier that way).
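The core loop of a skill like this is small. Below is a minimal sketch of the "go back and forth until they agree or hit a deadlock" idea. It is not the actual skill: the `converge` function, the `author` and `reviewer` callables, and the toy agents are all hypothetical stand-ins, and wiring them to real model CLIs or APIs is deliberately left out.

```python
from typing import Callable, Tuple

# Hypothetical agent shapes (assumptions for illustration only):
#   author(task, feedback)  -> a new draft
#   reviewer(task, draft)   -> (approved, feedback)
Author = Callable[[str, str], str]
Reviewer = Callable[[str, str], Tuple[bool, str]]


def converge(task: str, author: Author, reviewer: Reviewer,
             max_rounds: int = 5) -> Tuple[str, str, int]:
    """Alternate author and reviewer until approval or the round limit.

    Returns (status, last_draft, rounds_used); status is "agreed" or "deadlock".
    """
    feedback = ""
    draft = ""
    for round_number in range(1, max_rounds + 1):
        draft = author(task, feedback)
        approved, feedback = reviewer(task, draft)
        if approved:
            return "agreed", draft, round_number
    return "deadlock", draft, max_rounds


# Toy agents so the loop can be run without any model behind it.
def toy_author(task: str, feedback: str) -> str:
    # Pretend to address the reviewer's complaint.
    return f"{task} [with tests]" if "tests" in feedback else task


def toy_reviewer(task: str, draft: str) -> Tuple[bool, str]:
    if "[with tests]" in draft:
        return True, ""
    return False, "missing tests"


if __name__ == "__main__":
    print(converge("add login", toy_author, toy_reviewer))
```

The round limit is the important design choice: without it, two stubborn models can argue forever, so a deadlock gets surfaced to the human instead.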

It's also way safer to generate your own skills than to use some random public skills that somebody else wrote. Who knows what kind of prompt injections lie in wait for you in random public repositories? Describe the behavior you want, point at some trusted repos as examples (those owned by Anthropic, OpenAI, or well-known third parties like Garry Tan's GStack), and then it's off to the races.

Main takeaway here is that Big Model doesn’t want you to realize that their agents work well together. They would rather own your entire token spend than have you split it between providers. I think the future is here and it is multi-model. Claude and Codex are both very good, and are better when working together than on their own.

Twitter Clone Results

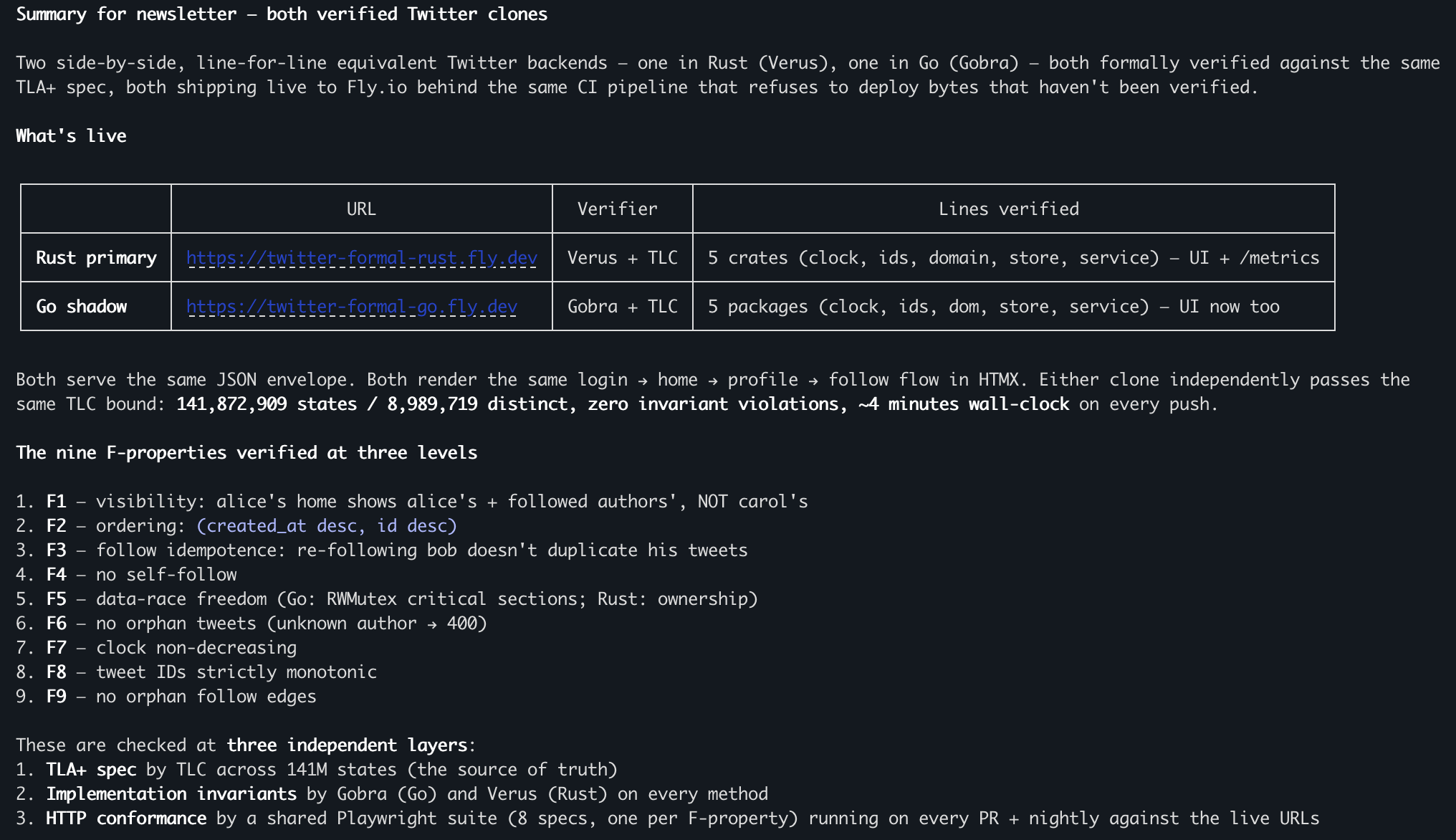

I created two Twitter clones (Rust and golang) this weekend to test the premise of using multi-model convergence for planning, implementation, and testing. I also wanted to try out formal verification frameworks.

Here’s how Claude summarized what it’s done so far:

Both clones are working from the same formal specification repo and have equivalent behavior. The next step for me is to scale this up with bigger and bigger applications. I'll let you know how it goes.

Mo’ Process, Mo’ Results

These Twitter clones are an example of how we can build out a completely battle-tested end-to-end process, maximizing the tokens that we use in smart ways. I ran out of my $200/mo Claude session usage in the process and had to switch over to Codex as the main driver for a bit.

We want to maximize the layers of soft and hard guardrails. Soft guardrails are anything that the developer does on their laptop before the code goes up to GitHub. The hard guardrails are everything that happens in the CI/CD pipeline that is enforced before the code gets merged into the rest of the codebase and deployed to production.
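Here is one way the soft/hard split could be sketched in code. Everything in it is hypothetical: the `Guardrail` type, the check names, and the dict of results are placeholders for whatever linters, test runners, and provers a real pipeline would call.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical stand-in for "the change under review": a dict of results that
# real tooling (linters, test runners, provers) would produce.
Change = dict


@dataclass(frozen=True)
class Guardrail:
    name: str
    kind: str                        # "soft" = developer's laptop, "hard" = CI/CD gate
    check: Callable[[Change], bool]


def run_guardrails(change: Change, guardrails: list) -> list:
    """Run every layer independently and return the names of the ones that failed."""
    return [g.name for g in guardrails if not g.check(change)]


def may_deploy(change: Change, guardrails: list) -> bool:
    """Only hard guardrails block a merge; soft ones steer the agent before the push."""
    hard = [g for g in guardrails if g.kind == "hard"]
    return not run_guardrails(change, hard)


GUARDRAILS = [
    Guardrail("lint on save", "soft", lambda c: c.get("lint_ok", False)),
    Guardrail("unit tests", "hard", lambda c: c.get("unit_ok", False)),
    Guardrail("integration tests", "hard", lambda c: c.get("integration_ok", False)),
    Guardrail("formal verification", "hard", lambda c: c.get("proofs_ok", False)),
]

if __name__ == "__main__":
    change = {"lint_ok": True, "unit_ok": True, "integration_ok": True, "proofs_ok": False}
    print(run_guardrails(change, GUARDRAILS))   # names of the layers that failed
    print(may_deploy(change, GUARDRAILS))       # whether every hard gate passed
```

Each check looks only at its own result, so one layer failing (or being skipped) does not stop the others from catching a problem. The full list of failures is also exactly the kind of feedback an agent can iterate against.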

It is my hypothesis that humans are less special (or needed) than we think we are when it comes to automatable skills. That isn’t to say that it’s bad to take pride in your work. We even identify with who we become through our work. We learn a lot about ourselves through trials and tribulations that mold us into someone who can ascend a (corporate or otherwise) skill hierarchy.

At the same time, processes and technology have always made frontline workers more fungible and interchangeable from the perspective of higher levels of human organization. This can be scary, but I encourage you to make yourself non-fungible. Figure out your own unique perspective on how you can use this technology and create new value for others with it. You’re reading mine right now.

Using AI tooling is now table stakes. I’m doing my best to go all-in.

[1] With a mathematical construct called a “proof”, which you may remember from high school geometry